단축키

Prev이전 문서

Next다음 문서

단축키

Prev이전 문서

Next다음 문서

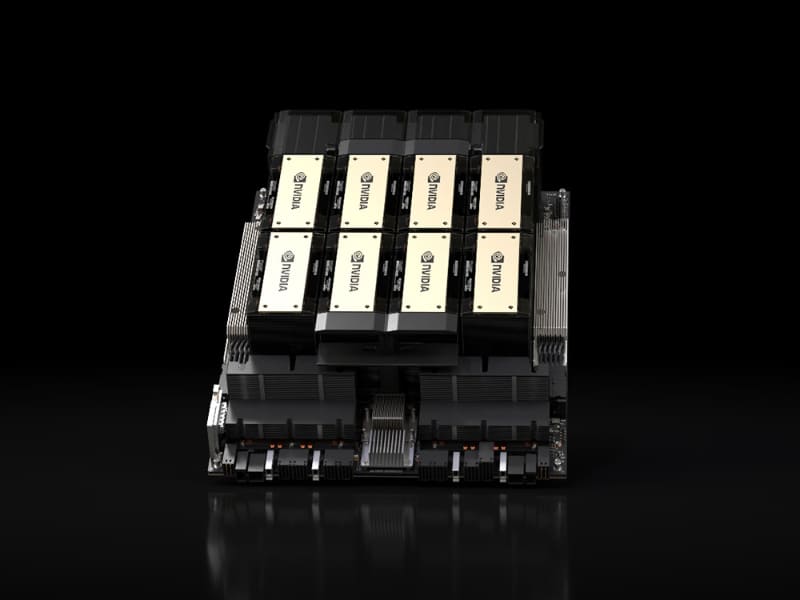

NVIDIA가 호퍼 아키텍처 GPU와 HBM3e 메모리를 탑재한 H200, 그리고 HGX H200을 발표했습니다.

메모리 대역폭은 4.8TB/s, 용량은 141GB로 H100보다 대역폭이 1.4배, 용량이 2배로 늘었습니다. 그래서 Llama2 70B는 1.9배, GPT-3 175B는 1.6배의 성능 향상이 있습니다.

SC23—NVIDIA today announced it has supercharged the world’s leading AI computing platform with the introduction of the NVIDIA HGX™ H200. Based on NVIDIA Hopper™ architecture, the platform features the NVIDIA H200 Tensor Core GPU with advanced memory to handle massive amounts of data for generative AI and high performance computing workloads.

The NVIDIA H200 is the first GPU to offer HBM3e — faster, larger memory to fuel the acceleration of generative AI and large language models, while advancing scientific computing for HPC workloads. With HBM3e, the NVIDIA H200 delivers 141GB of memory at 4.8 terabytes per second, nearly double the capacity and 2.4x more bandwidth compared with its predecessor, the NVIDIA A100.

H200-powered systems from the world’s leading server manufacturers and cloud service providers are expected to begin shipping in the second quarter of 2024.

“To create intelligence with generative AI and HPC applications, vast amounts of data must be efficiently processed at high speed using large, fast GPU memory,” said Ian Buck, vice president of hyperscale and HPC at NVIDIA. “With NVIDIA H200, the industry’s leading end-to-end AI supercomputing platform just got faster to solve some of the world’s most important challenges.”

Perpetual Innovation, Perpetual Performance Leaps

The NVIDIA Hopper architecture delivers an unprecedented performance leap over its predecessor and continues to raise the bar through ongoing software enhancements with H100, including the recent release of powerful open-source libraries like NVIDIA TensorRT™-LLM.

The introduction of H200 will lead to further performance leaps, including nearly doubling inference speed on Llama 2, a 70 billion-parameter LLM, compared to the H100. Additional performance leadership and improvements with H200 are expected with future software updates.

NVIDIA H200 Form Factors

NVIDIA H200 will be available in NVIDIA HGX H200 server boards with four- and eight-way configurations, which are compatible with both the hardware and software of HGX H100 systems. It is also available in the NVIDIA GH200 Grace Hopper™ Superchip with HBM3e, announced in August.

With these options, H200 can be deployed in every type of data center, including on premises, cloud, hybrid-cloud and edge. NVIDIA’s global ecosystem of partner server makers — including ASRock Rack, ASUS, Dell Technologies, Eviden, GIGABYTE, Hewlett Packard Enterprise, Ingrasys, Lenovo, QCT, Supermicro, Wistron and Wiwynn — can update their existing systems with an H200.

Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure will be among the first cloud service providers to deploy H200-based instances starting next year, in addition to CoreWeave, Lambda and Vultr.

Powered by NVIDIA NVLink™ and NVSwitch™ high-speed interconnects, HGX H200 provides the highest performance on various application workloads, including LLM training and inference for the largest models beyond 175 billion parameters.

An eight-way HGX H200 provides over 32 petaflops of FP8 deep learning compute and 1.1TB of aggregate high-bandwidth memory for the highest performance in generative AI and HPC applications.

When paired with NVIDIA Grace™ CPUs with an ultra-fast NVLink-C2C interconnect, the H200 creates the GH200 Grace Hopper Superchip with HBM3e — an integrated module designed to serve giant-scale HPC and AI applications.

Accelerate AI With NVIDIA Full-Stack Software

NVIDIA’s accelerated computing platform is supported by powerful software tools that enable developers and enterprises to build and accelerate production-ready applications from AI to HPC. This includes the NVIDIA AI Enterprise suite of software for workloads such as speech, recommender systems and hyperscale inference.

Availability

The NVIDIA H200 will be available from global system manufacturers and cloud service providers starting in the second quarter of 2024.

Watch Buck’s SC23 special address on Nov. 13 at 6 a.m. PT to learn more about the NVIDIA H200 Tensor Core GPU.

https://nvidianews.nvidia.com/news/nvidia-supercharges-hopper-the-worlds-leading-ai-computing-platform